AI Upskilling for HIPAA Compliance: What to Know


Updated Mar 28, 2026

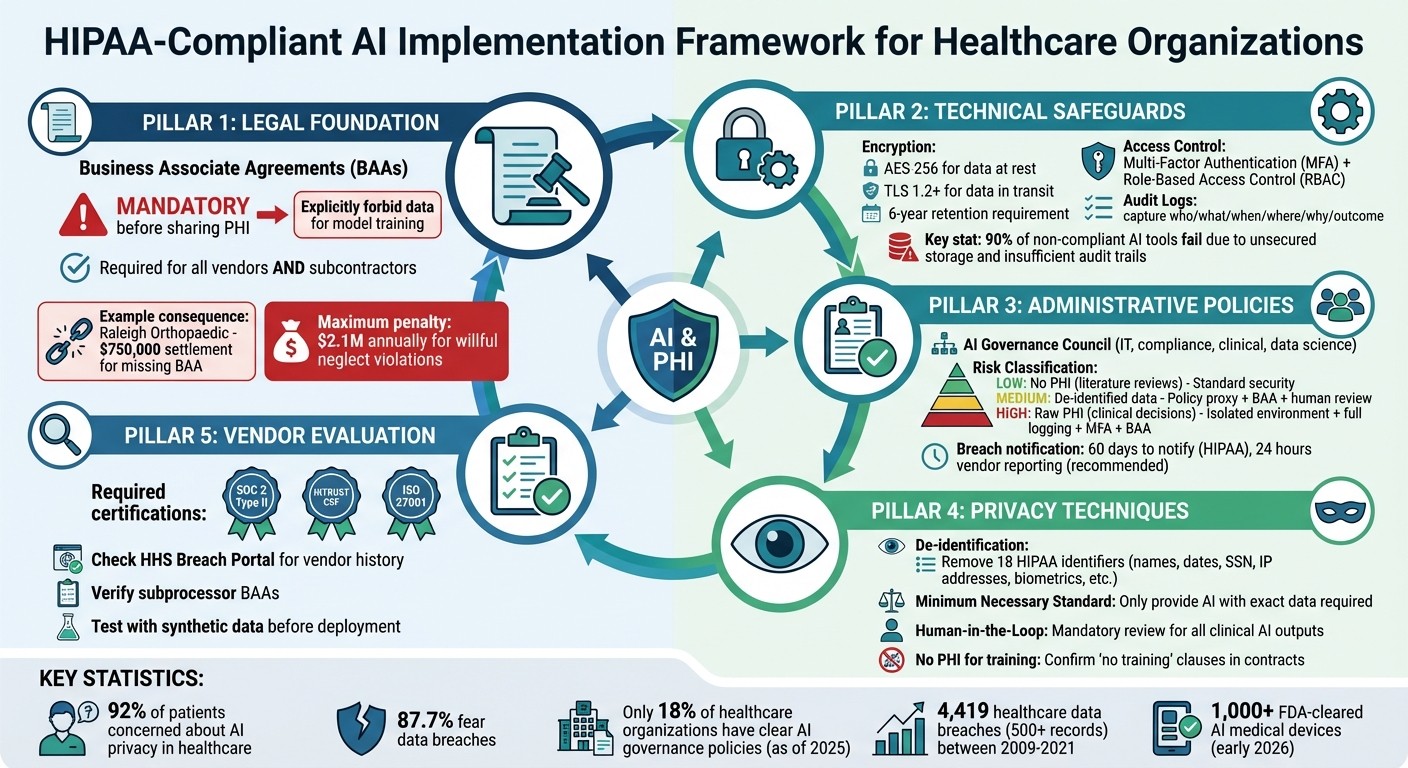

Practical HIPAA guidance for using AI with patient data: BAAs, encryption, access controls, logging, de-identification, and staff training.

AI is transforming healthcare, but using it with patient data comes with risks. If you're in healthcare, here's what you need to know about staying HIPAA-compliant with AI tools:

HIPAA Rules Apply to AI: Privacy, Security, and Breach Notification rules require strict safeguards for patient data. This includes encryption, access control, and audit logging.

Risks to Watch: AI tools can memorize sensitive data, be tricked into revealing PHI, or expose data if used without proper agreements. Using tools like ChatGPT without safeguards is a common issue.

Business Associate Agreements (BAAs): Before sharing patient data with an AI vendor, ensure a signed BAA is in place. It should explicitly forbid using data for training models.

Technical Safeguards: Use encryption (AES-256), secure data transmission (TLS 1.2+), and strict access controls. Log every interaction involving patient data.

Staff Training: Tailor training to roles - clinicians, IT, and compliance teams need to understand their responsibilities in protecting PHI.

Vendor Evaluation: Check vendors' security certifications, breach history, and data handling policies. Avoid consumer-grade AI tools for sensitive tasks.

Staying compliant isn't just about avoiding fines (up to $2.1M annually for violations) - it's about protecting patient trust. Clear policies, strong safeguards, and ongoing training are key.

HIPAA-Compliant AI Implementation Framework for Healthcare Organizations

Core HIPAA Principles for AI Training Programs

HIPAA Rules That Apply to AI Systems

When AI systems handle patient data, three main HIPAA rules come into play. First, the Privacy Rule establishes national standards for using and disclosing Protected Health Information (PHI). A key part of this rule is the "Minimum Necessary" standard, which means staff should give AI tools only the data required for a specific task - nothing extra [8][1]. Second, the Security Rule requires administrative, physical, and technical safeguards to protect electronic PHI (ePHI). Common implementations include AES-256 encryption, secure transmission protocols like TLS 1.2 or higher, role-based access controls, and multi-factor authentication (MFA) [8][11][4]. Lastly, the Breach Notification Rule obligates organizations to notify affected individuals and the Department of Health and Human Services when unsecured PHI is compromised; notices to individuals are due no later than 60 days after the breach is discovered [8][10][4].

The Omnibus Rule extends these requirements to Business Associates, including AI vendors, and to their subcontractors [8]. An AI vendor that mishandles patient data can therefore be held directly accountable, alongside the healthcare organization itself. Separately, for data to count as "de-identified" under HIPAA's Safe Harbor method, 18 specific identifiers - such as names, biometric data, and IP addresses - must be removed [7][4].

Grasping these rules is vital to understanding how AI systems could be vulnerable to specific risks.

Common Compliance Risks in AI Workflows

AI systems bring challenges that traditional healthcare workflows don’t encounter. One issue is model memorization, where large language models inadvertently reproduce PHI from their training data. If patient details surface in an AI’s output to someone who is not authorized to see them, that can constitute a breach [4]. Another concern is prompt injection, where malicious inputs trick an AI system into revealing PHI stored in its context window [5]. Between 2009 and 2021, there were 4,419 healthcare data breaches involving 500 or more records [8], and improperly managed AI can add to that exposure.

Shadow AI is another growing concern. This happens when healthcare staff use consumer-grade tools like ChatGPT or Claude to handle clinical notes without a Business Associate Agreement (BAA), potentially leading to unauthorized PHI disclosures [4]. Additionally, many organizations fail to log the content of prompts and responses, tracking only who accessed the AI system. This creates incomplete audit trails [4][12]. Vendor management can also fall short when organizations assume a general cloud BAA covers specific AI services, which is often not the case [5].

"If an attacker can manipulate your AI agent into revealing PHI from its context window, that is a data breach under HIPAA." - BeyondScale Team [5]

Effectively addressing these risks requires strong legal agreements and a clear understanding of staff responsibilities.

Business Associate Agreements (BAAs) and Staff Responsibilities

BAAs and well-defined staff roles are essential for enforcing HIPAA principles. Before sharing PHI with an AI vendor, a signed BAA is mandatory [14][1]. Healthcare providers must ensure this agreement is in place, while AI vendors are responsible for maintaining compliance and securing BAAs with any subcontractors [1][4]. For example, Raleigh Orthopaedic faced a $750,000 settlement with the Office for Civil Rights after disclosing the ePHI of approximately 17,000 patients to a vendor without a signed BAA [11].

Traditional BAAs often fall short of addressing AI-specific scenarios, such as the use of patient data for model training or how data is stored in model weights [1][4]. Organizations need to confirm that their BAAs explicitly prohibit vendors from using uploaded PHI to train or enhance their models [4][12]. While leadership is responsible for securing these agreements, staff must follow the established guidelines and avoid using uncertified consumer AI tools [1][5].

"If a potential AI vendor is unwilling to sign a BAA, the conversation is over." - The Prosper Team, Prosper AI [14]

To ensure compliance, organizations should appoint designated Security and Privacy Officers to oversee these agreements and policies [13][10]. With the maximum annual penalty for HIPAA violations at about $2.1 million as of 2025 [4], failing to secure a BAA is a serious issue - even if no data breach occurs [14].


Technical Safeguards for AI Systems Handling PHI

The HIPAA Security Rule lays out specific technical measures to safeguard electronic Protected Health Information (PHI) in AI systems. These measures are essential for ensuring compliance and must be understood by everyone involved with patient data - from IT teams to clinicians using AI tools.

Encryption and Secure Data Transmission

Encryption is a cornerstone of protecting PHI. For data at rest, AES-256 encryption is the industry standard for securing PHI in databases, file storage, caches, model artifacts, vector indexes, and logs. For data in transit, use TLS 1.2 at minimum, though TLS 1.3 is preferred, to secure API communications, ETL jobs, and model-to-database interactions. For data in use, secure enclaves and memory protection mechanisms ensure PHI remains safe during active processing.

Sensitive fields in prompts, retrieval stores, and fine-tuning datasets should also be encrypted to minimize exposure in case of a breach. Use hardware security modules (HSMs) or cloud-based key management systems (KMS) for secure key storage, and implement regular key rotation and role segregation for key management duties. To prevent tampering, apply integrity controls like signing and verifying model checkpoints and data manifests.
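The integrity control mentioned above can be illustrated with a keyed hash. The following is a minimal sketch, assuming HMAC-SHA256 from the Python standard library as a stand-in for "signing"; the function names, the demo key, and the sample checkpoint bytes are hypothetical. HMAC is symmetric, so anyone who can verify can also sign. Where the verifier should not hold the signing key, an asymmetric signature scheme would be the better fit.

```python
import hashlib
import hmac


def sign_artifact(data: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag for a model checkpoint or data manifest."""
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def verify_artifact(data: bytes, key: bytes, expected_tag: str) -> bool:
    """Recompute the tag and compare in constant time."""
    return hmac.compare_digest(sign_artifact(data, key), expected_tag)


# Hypothetical values for illustration; a real key would be fetched from an HSM or KMS.
demo_key = b"replace-with-key-from-kms"
checkpoint = b"model-weights-v1"
tag = sign_artifact(checkpoint, demo_key)
```

A deployment step could call `verify_artifact` before loading a checkpoint and refuse to proceed when it returns `False`.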

"The primary threat to protected health information (PHI) isn't 'LLMs in general' but sending identifiers to public, multi-tenant models... where retention, training, and geography may sit outside your control." - Stanislav Ostrovskiy, Partner, Edenlab [16]

Automate CI/CD pipeline scans to block unencrypted data paths. Use short time-to-live (TTL) settings for caches, temporary files, and intermediate outputs to reduce the data footprint. These encryption measures, when paired with HIPAA training protocols, create a strong framework for safeguarding PHI in AI workflows.

Access Control and Multi-Factor Authentication (MFA)

Access to PHI should be tightly controlled, ensuring only authorized personnel can view or modify sensitive data. Multi-Factor Authentication (MFA) is essential for securing AI system access, with phishing-resistant authenticators like FIDO2/WebAuthn preferred over SMS-based codes. For high-risk actions - such as exporting large ePHI datasets or accessing sensitive admin pages - additional MFA verification (step-up MFA) should be required.

Role-Based Access Control (RBAC) enforces the "minimum necessary" standard by assigning permissions to roles (e.g., clinician, data scientist, IT support) instead of individuals. MFA should integrate with Single Sign-On (SSO) or Identity Providers (IdP) to ensure consistent enforcement across cloud admin consoles and AI interfaces. AI system sessions should automatically time out after 5–15 minutes of inactivity, depending on the device's security level - shorter for public kiosks and slightly longer for secure workstations.
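As a rough illustration of role-based permissions and idle timeouts, the sketch below assumes a hypothetical role-to-permission map and two device classes. The permission names and the specific 5- and 15-minute limits are assumptions chosen from the range described above, not values prescribed by HIPAA.

```python
from datetime import datetime, timedelta

# Hypothetical role-to-permission map; real roles would come from the IdP.
ROLE_PERMISSIONS = {
    "clinician": {"read_phi", "write_note"},
    "data_scientist": {"read_deidentified"},
    "it_support": {"manage_accounts"},
}

# Assumed idle limits per device class.
IDLE_LIMITS = {
    "public_kiosk": timedelta(minutes=5),
    "secure_workstation": timedelta(minutes=15),
}


def is_allowed(role: str, permission: str) -> bool:
    """Permissions attach to roles, never to individual users."""
    return permission in ROLE_PERMISSIONS.get(role, set())


def session_expired(last_activity: datetime, now: datetime, device: str) -> bool:
    """Unknown device classes fall back to the strictest limit."""
    limit = IDLE_LIMITS.get(device, min(IDLE_LIMITS.values()))
    return now - last_activity > limit
```

Denying by default for unknown roles and unknown devices keeps a configuration gap from silently widening access.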

Automated Joiner-Mover-Leaver workflows, triggered by HR systems, streamline access provisioning and revocation during role changes or terminations. Quarterly reviews of privileged access and role assignments prevent "permission creep" in AI development environments. Additionally, MFA should protect encryption key management operations, including key creation, usage, and deletion within AI data pipelines.

Audit Logs and Monitoring for HIPAA Compliance

Continuous monitoring is critical for identifying potential breaches. Audit logs provide an immutable record of every interaction involving PHI in AI systems. HIPAA mandates retaining these logs for six years [17]. Each entry should capture six key elements: who (user identity), what (operation/resource accessed), when (timestamp), where (source IP/device ID), why (purpose), and outcome (success or failure).

For AI systems, logging must extend beyond standard access events to include model-to-database calls, prompt inputs, inference endpoints, and every decryption event involving PHI. By one estimate, 90% of non-compliant AI tools fail because of unsecured data storage and insufficient audit trails [18]. Standard database logs alone are inadequate, because users with write access could erase evidence of unauthorized activity.
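The six elements can be captured in a small record type. This is a sketch only: the class name, field formats, and the JSON-lines serialization are assumptions made for illustration, not a format that HIPAA prescribes.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    who: str      # user identity
    what: str     # operation or resource accessed
    when: str     # ISO 8601 timestamp in UTC
    where: str    # source IP or device ID
    why: str      # stated purpose
    outcome: str  # "success" or "failure"


def make_event(who, what, where, why, outcome, now=None) -> AuditEvent:
    """Build an event, stamping it with the current UTC time unless one is given."""
    if outcome not in ("success", "failure"):
        raise ValueError("outcome must be 'success' or 'failure'")
    now = now or datetime.now(timezone.utc)
    return AuditEvent(who, what, now.isoformat(), where, why, outcome)


def to_json_line(event: AuditEvent) -> str:
    """Serialize with sorted keys so the same event always yields the same text."""
    return json.dumps(asdict(event), sort_keys=True)
```

Making the record frozen means application code cannot edit an event after it is created, although that is no substitute for tamper-evident storage.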

To ensure log integrity, use SHA-256 hash chaining to link entries, making tampering detectable. Store log archives in "Write-Once-Read-Many" (WORM) systems like AWS S3 Object Lock or Azure Immutable Blob Storage to prevent deletion, even by administrators. For high-volume workflows, Merkle trees enable efficient log verification without needing to check the entire chain.
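Hash chaining can be sketched in a few lines. The example below assumes an in-memory list and an all-zero starting hash, both chosen for illustration; a production log would persist entries to WORM storage as described above.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed starting value for the first entry


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with this entry's payload."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + body).hexdigest()


def append_entry(chain: list, payload: dict) -> None:
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"payload": payload, "prev": prev, "hash": entry_hash(prev, payload)})


def verify_chain(chain: list) -> bool:
    """Walk the chain; any edited, removed, or reordered entry breaks a link."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["payload"]):
            return False
        prev = entry["hash"]
    return True
```

Because each hash covers the previous one, changing an early entry invalidates every later link. Detection still depends on an attacker being unable to rewrite the whole chain, which is why the immutable storage layer matters.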

A policy proxy can add another layer of security by intercepting AI prompts, stripping identifiers, enforcing "minimum necessary" access, and logging the full interaction chain immutably. Regular audits of training and inference logs are essential to identify PHI leakage or unusual access patterns before they escalate into full-blown breaches.

Administrative Policies for HIPAA-Compliant AI Training

Technical safeguards are crucial, but they’re only part of the equation. Strong administrative policies are what ensure consistent AI usage and accountability. Even the most secure AI systems need clear guidelines for user roles, tool usage, and incident response.

Acceptable Use Policies for AI Tools

Consumer-grade AI tools should never be used for Protected Health Information (PHI). These tools often lack Business Associate Agreements (BAAs) and could potentially repurpose input data [16][17]. As Stanislav Ostrovskiy from Edenlab explains:

"The first question isn't which model, but what the model is allowed to see." [16]

To manage AI tool deployment effectively, establish an AI Governance Council. This council should include representatives from IT, compliance, clinical leadership, and data science. Their role is to approve AI use cases and classify them into risk levels:

Low Risk: No PHI exposure (e.g., literature reviews).

Medium Risk: Involves de-identified data or metadata only.

High Risk: Access to raw PHI.

Each classification should have corresponding safeguards. For example, any workload involving PHI should be deployed in isolated, single-tenant environments [16].
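The three tiers can be encoded so that every approved use case carries an explicit classification. This is a minimal sketch: the enum, the two boolean inputs, and the single safeguard check are assumptions made for illustration, and a real council would track more attributes than these.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"        # no PHI exposure
    MEDIUM = "medium"  # de-identified data or metadata only
    HIGH = "high"      # access to raw PHI


def classify_use_case(touches_raw_phi: bool, uses_deidentified_data: bool) -> RiskTier:
    """Pick the highest tier that applies to the use case."""
    if touches_raw_phi:
        return RiskTier.HIGH
    if uses_deidentified_data:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def requires_single_tenant(tier: RiskTier) -> bool:
    """PHI workloads are the ones the text says belong in isolated, single-tenant environments."""
    return tier is RiskTier.HIGH
```

For instance, a literature-review assistant with no patient data would classify as `RiskTier.LOW`, while a note-summarization tool reading charts would classify as `RiskTier.HIGH`.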

To combat "Shadow AI" (unapproved tools deployed without IT oversight), create formal request channels for new AI tools [3]. Additionally, require human-in-the-loop oversight for all AI-generated outputs before they are used in clinical documentation or shared externally [20].

Incident Response and Breach Notification Procedures

AI systems bring unique breach risks, such as model memorization (where AI reproduces PHI from training data), prompt injection attacks, and unauthorized PHI exposure in inference logs [4][5]. Your incident response plan must address these specific challenges.

BAAs should include vendor notification requirements. While HIPAA allows up to 60 days for breach notification, agreements with AI vendors should mandate reporting security incidents within 24 hours [4][21]. Ensure these agreements also cover all sub-processors [4].
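The two time windows above reduce to simple date arithmetic. The sketch below assumes that both clocks start at the moment of discovery and uses hypothetical names; the actual trigger and any shorter contractual terms depend on the BAA and on legal counsel.

```python
from datetime import datetime, timedelta, timezone

HIPAA_NOTIFICATION_WINDOW = timedelta(days=60)  # outer limit cited above
VENDOR_REPORTING_WINDOW = timedelta(hours=24)   # contractual target suggested above


def notification_deadlines(discovered_at: datetime) -> dict:
    """Return both due dates measured from the discovery timestamp."""
    return {
        "vendor_report_due": discovered_at + VENDOR_REPORTING_WINDOW,
        "hipaa_notice_due": discovered_at + HIPAA_NOTIFICATION_WINDOW,
    }
```

An incident tracker could compute these at intake and alert as either date approaches.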

Regular tabletop exercises are essential. These should simulate AI-specific breach scenarios, such as vendor-side data leaks or prompt injection attacks, to test escalation paths and forensic readiness [3][19]. Use "policy-as-code" to automatically flag unusual access patterns or detect when ePHI moves into unapproved AI tools, triggering immediate alerts [19].

Joe Braidwood, CEO of GLACIS, underscores the importance of execution:

"The question isn't whether your AI vendor has policies. It's whether you can prove those policies executed when the plaintiff's attorney asks for evidence during discovery." [4]

Beyond managing incidents, continuous training tailored to roles strengthens compliance and accountability.

Role-Based Training and Accountability

HIPAA training should be customized based on how each role interacts with AI [3]. For example:

Clinicians: Apply the "minimum necessary" standard and avoid reintroducing identifiers in secure communications.

Data and ML Teams: Use Named Entity Recognition (NER) for de-identification and document datasets using model cards [3][5].

Compliance Officers: Validate consent and prepare for OCR desk audits [3][6].

Automated training systems can assign micro-lessons when staff behavior poses a specific risk [19]. For AI-assisted documentation, clinicians should review and approve AI-generated notes to maintain a verifiable audit trail [19].

HIPAA mandates retaining all AI-related documentation, including audit logs and training records, for at least six years [17]. Alarmingly, as of 2025, only 18% of healthcare organizations have clear AI governance policies in place [18]. This leaves the majority exposed to potential penalties, which can reach $2.1 million annually for willful neglect violations [4]. These administrative policies are essential for enforcing technical safeguards and maintaining a defensible compliance framework.

Privacy-Preserving Techniques for AI Training Programs

Privacy-preserving techniques are critical for ensuring the protection of Protected Health Information (PHI) in AI training and operations. These methods go beyond administrative safeguards, directly reducing the exposure of sensitive data in AI workflows. With 92% of patients expressing concerns about AI privacy in healthcare and 87.7% fearing breaches [2], adopting these measures is crucial to maintaining both trust and compliance.

De-Identification and Anonymization Best Practices

HIPAA outlines two key methods for de-identification:

Safe Harbor Method: This involves removing 18 specific identifiers, such as names, geographic details smaller than a state, date elements (except the year), Social Security numbers, medical record numbers, email addresses, phone numbers, IP addresses, biometric data, and full-face photographs [9].

Expert Determination Method: A qualified professional uses statistical analysis to ensure the risk of re-identification is minimal and documents the findings [16].

To further protect privacy, adopt a metadata-first design. Instead of using raw PHI, provide AI systems with generalized data, like ICD-10 codes, age ranges, or relevant clinical details. For example, a policy proxy can strip or replace identifiers before data is processed by the AI system [16]. This ensures actual identifiers remain within the organization's secure perimeter.
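A metadata-first view of a record might look like the following sketch. The field names (`age`, `icd10_codes`) and the ten-year bucket width are assumptions for illustration. The single "90+" bucket reflects the Safe Harbor treatment of ages over 89 as one category.

```python
def age_range(age: int, width: int = 10) -> str:
    """Generalize an exact age into a bucket such as '40-49'."""
    if age >= 90:
        return "90+"  # ages over 89 are grouped into one category
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


def to_model_view(record: dict) -> dict:
    """Build an allowlisted view: only generalized, task-relevant fields pass through."""
    return {
        "age_range": age_range(record["age"]),
        "icd10_codes": list(record.get("icd10_codes", [])),
    }
```

Building the output from an allowlist, rather than deleting known identifiers from the input, means a field added to the record later is excluded by default.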

Using PHI to train AI models in public or shared environments is generally incompatible with HIPAA. Compliant deployments process data in real time without retaining or learning from PHI [2][16]. To stay compliant, confirm that vendor contracts explicitly include "no training" clauses, ensuring your data won't be used to train global models [16]. Always apply de-identification early in the workflow, before any data enters prompts or embeddings [16].

Prompt Engineering to Minimize PHI Exposure

Prompts should be designed to include only the minimum necessary PHI for a specific task [16][14]. Instead of loading an entire patient record, extract only the essential clinical details required.

To further reduce risk:

Use input filtering and redaction tools that scan prompts for sensitive data and automatically redact or tokenize it before reaching the AI model [3].

Configure Retrieval-Augmented Generation (RAG) systems to provide de-identified snippets or metadata instead of entire patient records [16].

Replace free-text identifiers with pseudonyms or generalized data, such as broad location ranges [16][3].
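Input filtering with tokenization can be sketched with regular expressions. The patterns below are illustrative assumptions that cover only a few of the 18 identifiers in US-style formats. They will miss names and other free-text identifiers, which typically need NER-based tooling.

```python
import re

# Illustrative patterns only; not a complete identifier list.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
    "IP": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
}


def redact(prompt: str) -> tuple[str, dict]:
    """Replace matches with numbered tokens; return the text and a token-to-value map."""
    vault = {}
    for label, pattern in PATTERNS.items():
        def _swap(match, label=label):
            token = f"[{label}_{len(vault) + 1}]"
            vault[token] = match.group(0)
            return token
        prompt = pattern.sub(_swap, prompt)
    return prompt, vault


clean, vault = redact("SSN 123-45-6789, email jane@example.com, phone 555-123-4567.")
```

Only `clean` would be sent to the model. The `vault` stays inside the organization's perimeter so that authorized systems can restore the original values afterwards.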

Defend against prompt injection attacks, where users attempt to manipulate the AI into bypassing security protocols. Implement input sanitization and hard-coded system prompts that are resistant to overrides [14]. Post-process outputs to ensure no sensitive identifiers are inadvertently exposed [3].

Human Oversight for AI Decisions

Human oversight is essential for reviewing AI-generated outputs [14]. Human-in-the-loop (HITL) systems play a key role in ensuring the accuracy and safety of AI outputs, especially when they impact patient care, billing, or critical clinical decisions [16]. This oversight helps catch "AI hallucinations", where the system generates false or misleading information that could lead to errors in diagnosis or treatment [14].

Regulations increasingly classify clinical AI as "high-risk", requiring explicit human oversight and robust risk management systems [17][16]. The level of oversight should match the task's risk. For example, administrative tasks may need periodic audits, while clinical decision-making requires mandatory human validation before any action is taken [16].

Maintain immutable audit trails to document every instance of human review, modification, or rejection of AI outputs. HIPAA mandates retaining these logs for six years [17][15].

How to Evaluate AI Vendors for HIPAA Compliance

Selecting the right AI vendor for healthcare workflows isn't just a technical decision - it’s a legal one. If a vendor mishandles Protected Health Information (PHI), your organization could share responsibility. That’s why a careful evaluation is non-negotiable before sharing any sensitive data.

Vendor Compliance Certifications and BAAs

Before sharing PHI, confirm that the vendor provides a signed Business Associate Agreement (BAA). This agreement must explicitly forbid PHI use for model training, outline how data is handled in model weights, and address risks related to re-identification [1]. As ConfideAI states:

"Without a BAA, sharing PHI with an AI vendor is a HIPAA violation. Full stop. It does not matter how good their encryption is... if there is no BAA, you are not covered" [23].

Since the government doesn’t issue official "HIPAA certifications", ask for third-party security attestations, such as SOC 2 Type II, HITRUST CSF, or ISO 27001, to gauge the vendor’s security measures [22]. Additionally, request a compliance packet that includes the signed BAA, SOC 2 Type II reports, a list of subprocessors, and a summary of recent penetration tests [22]. The subprocessor list is especially important - every third party involved in processing PHI must also have a BAA in place [1].
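The compliance packet review can be turned into a repeatable check. This sketch assumes the packet is recorded as a simple dictionary; the key names and the subprocessor structure are hypothetical.

```python
# Items the text above asks vendors to supply; key names are assumptions.
REQUIRED_ITEMS = (
    "signed_baa",
    "soc2_type2_report",
    "subprocessor_list",
    "pen_test_summary",
)


def missing_items(packet: dict) -> list:
    """Return required items that are absent or empty in the vendor packet."""
    return [item for item in REQUIRED_ITEMS if not packet.get(item)]


def subprocessors_without_baa(subprocessors: list) -> list:
    """Each subprocessor is a dict such as {'name': 'HostCo', 'has_baa': True}."""
    return [s["name"] for s in subprocessors if not s.get("has_baa")]
```

An empty result from both functions is a precondition for review, not proof of compliance. The documents themselves still need to be read.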

Take it a step further by reviewing the vendor’s past practices in data handling and their record of responding to security incidents.

Security Incident History and Data Handling Practices

Investigate the vendor’s breach history by checking the HHS Office for Civil Rights Breach Portal (known as the "Wall of Shame") for any incidents affecting 500 or more individuals [1]. Also, evaluate their data retention and deletion policies, particularly for PHI stored in model weights after the contract ends [1].

A robust vendor will maintain tamper-evident audit logs that track PHI access, processing details, and output destinations. These logs should be stored in a tamper-proof system for the required retention period [1]. Ask if their logging systems include layers to scrub PHI, reducing the risk of accidental exposure [5]. To test their readiness, simulate a breach scenario, such as a prompt injection attack that triggers PHI disclosure, and assess their response [1].

Clinical Validation and AI Model Monitoring

Beyond compliance and security, the vendor’s clinical performance monitoring is crucial. AI systems used in healthcare must undergo constant evaluation to prevent errors like "hallucinations" and maintain accuracy [14]. The FDA has already cleared over 1,000 AI-enabled medical devices for use in the U.S. as of early 2026, signaling the growing regulatory focus in this area [1].

Before committing, test the vendor’s platform with synthetic data to verify its accuracy [22]. Ensure they provide documentation of clinical validation and human oversight processes for high-risk applications [14]. It's also critical to confirm that enterprise-grade APIs with BAAs are used, as consumer versions of popular AI tools often lack HIPAA compliance [22].

For organizations exploring AI solutions like those offered by Lead Receipt (https://leadreceipt.com), vendor compliance at every level is essential to safeguarding patient privacy and minimizing legal risks.

Continuous Training and Monitoring for Compliance

Building on technical safeguards and administrative policies, ongoing training and monitoring are critical for maintaining HIPAA compliance in the ever-changing world of AI. Healthcare organizations must regularly refresh training, perform systematic audits, and stay updated on regulatory shifts. Without these efforts, they risk willful-neglect penalties with a statutory cap of $1,500,000 annually [1], which inflation adjustments have raised to about $2.1 million [4].

Regular Training Refreshers and Competency Assessments

Annual training alone doesn't cut it in AI-driven environments. Instead, organizations should adopt "just-in-time" training, where automated systems deliver role-specific micro-lessons when risky behaviors or policy deviations are detected [19]. This method provides immediate, actionable guidance when staff interact with AI tools in potentially non-compliant ways.

Training should be tailored to specific roles:

Clinicians focus on adhering to "minimum necessary" data use and approved workflows.

Data and machine learning teams need deeper instruction on topics like de-identification, dataset documentation, and safe prompt engineering [3][5].

Quarterly reviews of access permissions and Business Associate Agreement (BAA) inventories are also essential. These reviews ensure that staff roles and vendor relationships remain aligned with compliance requirements [5].

Effective training refreshers can include:

Phishing drills to identify vulnerabilities.

"Prompt-red team" exercises where staff attempt to bypass AI safety filters in controlled settings.

Tabletop breach scenarios focused on AI-specific risks like hallucinations or data leaks [3][1].

All training activities should be documented according to HIPAA retention protocols [3][4]. These efforts naturally feed into broader audit and risk assessment practices.

Audit and Risk Assessment of AI Systems

Any new AI tool used in healthcare requires an updated risk assessment under the HIPAA Security Rule. This process identifies vulnerabilities, such as prompt injection or model inversion attacks [1]. To maintain oversight, organizations should create a detailed inventory of all AI systems used for clinical documentation, billing, and patient triage.

Comprehensive audit logs are another must. These logs should capture every API call, model input, and output, retaining records for at least six years, consistent with the HIPAA retention period cited earlier [6][1]. Regularly reviewing these logs helps identify Protected Health Information (PHI) leaks and ensures "minimum necessary" data filters are functioning as intended [3]. Adding a PHI-scrubbing layer between AI agents and logging systems can further prevent sensitive data from being stored in debugging logs [5].
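A PHI-scrubbing layer for debug logs can be sketched with the standard `logging.Filter` hook. The two patterns are assumptions (a US SSN format and a hypothetical "MRN: digits" convention), and the logger name is invented for illustration. Real scrubbing would need much broader coverage.

```python
import logging
import re

SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
MRN_RE = re.compile(r"\bMRN[:\s]*\d+\b", re.IGNORECASE)  # assumed MRN format


class PhiScrubFilter(logging.Filter):
    """Rewrite each record's message before any handler formats it."""

    def filter(self, record: logging.LogRecord) -> bool:
        message = record.getMessage()  # merge args into the message first
        message = SSN_RE.sub("[SSN]", message)
        message = MRN_RE.sub("[MRN]", message)
        record.msg, record.args = message, None
        return True  # keep the record, now scrubbed


logger = logging.getLogger("ai_agent_debug")  # hypothetical logger name
logger.addFilter(PhiScrubFilter())
```

A filter attached to a logger applies only to records logged directly through that logger. Attaching the same filter to the handlers also covers records that propagate up from child loggers.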

Organizations should also conduct tabletop drills simulating AI-specific breach scenarios, such as vendor-level data exposure or model inversion attacks [6][3]. As Jennifer Walsh, Chief Compliance Officer at Health1st.ai, puts it:

"AI compliance isn't a blocker - it's a framework for responsible innovation" [6].

These audits and drills provide critical insights for adapting to ongoing regulatory changes.

Adapting to Regulatory Changes and AI Advancements

The regulatory landscape is evolving quickly. New legislation, such as the Colorado AI Act (effective June 2026) and the EU AI Act (August 2026), is shaping compliance frameworks alongside HIPAA [4]. Meanwhile, the HHS Office for Civil Rights is modernizing its enforcement strategies (OCR 2.0) to focus on cloud AI platforms, algorithmic transparency, and targeted desk audits of AI implementations [6].

To stay ahead, organizations must treat BAAs as living documents. Traditional BAA templates often fail to address AI-specific risks, like training models on PHI or data persistence in model weights [1]. Establishing an AI Governance Council - with representatives from clinical, IT, and compliance teams - can help approve new AI use cases and ensure data ingestion complies with "minimum necessary" standards [3].

Workflow automation can also play a key role. For instance, auto-assign role-based training whenever a new policy is introduced or when staff responsibilities change [19]. Regularly reviewing vendor release notes for updates on security fixes and data handling practices ensures internal training remains relevant [3].

For organizations exploring AI tools, like those offered by Lead Receipt (https://leadreceipt.com), it's crucial to have compliance programs that can quickly adapt to both technological advancements and new regulations.

Conclusion: Building a HIPAA-Compliant AI Training Program

Creating a HIPAA-compliant AI training program requires a long-term commitment to structured processes, clear policies, and meticulous vendor evaluations. Joe Braidwood, CEO of GLACIS, sums it up perfectly:

"There is no 'HIPAA certified AI.' HIPAA compliance is not a product attribute - it's an operational state that depends on how AI is deployed, configured, documented, and monitored" [4].

The foundation of compliance begins with a thorough risk analysis. This involves assessing vulnerabilities specific to AI and establishing an AI governance council to oversee use cases and enforce the "minimum necessary" standard for PHI access [3]. Business Associate Agreements (BAAs) should explicitly address key areas like model training permissions, data residency, and sub-processor management [4][1].

Training programs should be tailored to specific roles. Clinicians, for instance, need guidance on workflows and minimal data usage, while data scientists must focus on de-identification techniques and secure prompt engineering [3]. On the technical side, safeguards like AES-256 encryption, multi-factor authentication (MFA), and detailed audit logs that meet six-year retention requirements are essential [4][1].

Human oversight is also critical. While AI can assist with tasks like drafting notes or triaging cases, all clinical decisions must ultimately be reviewed by a human [18]. De-identification proxies can help strip the 18 HIPAA identifiers from data before it is used in AI systems, and consumer-grade AI tools should be avoided unless they meet strict security and compliance standards, including BAAs [4][16].

Finally, combining internal safeguards with thorough vendor assessments strengthens your compliance framework. For healthcare teams using AI-driven tools like those offered by Lead Receipt (https://leadreceipt.com), compliance should be seen as a pathway to responsible innovation rather than a hurdle. With only 18% of healthcare organizations currently having clear AI governance policies [18], investing in structured compliance programs is vital. This not only ensures safe AI implementation but also helps avoid penalties, which can reach up to $2,067,813 annually [4].

FAQs

Can we use AI with PHI without violating HIPAA?

Yes, provided the right safeguards are in place. PHI can be shared with an AI vendor only under a signed BAA, limited to the minimum necessary for the task, and protected with encryption, access controls, and audit logging. Using PHI to train AI models carries stricter requirements: the data should be de-identified and handled under rigorous protocols. Failing to meet these standards can lead to severe legal and financial consequences.

What must an AI vendor’s BAA include?

A Business Associate Agreement (BAA) with an AI vendor must spell out specific responsibilities for safeguarding protected health information (PHI). It should outline clear protocols for handling, storing, and transmitting PHI in line with HIPAA's Privacy and Security Rules, and address measures to prevent breaches or unauthorized access. For AI specifically, it should prohibit using PHI to train or improve models, cover subcontractors and sub-processors, and set a short window for reporting security incidents.

What should we log for HIPAA when using AI?

Log every interaction with Protected Health Information (PHI), not just who opened the tool. Audit trails should document data access, changes, and disclosures, including prompt inputs, model outputs, and model-to-database calls, and each entry should record the user, operation, timestamp, source, purpose, and outcome. Kept for six years in tamper-evident storage, these logs support compliance, help identify potential breaches, and protect sensitive patient information.